AI has crossed a threshold. It’s no longer a set of experiments running in a lab environment where delays are inconvenient but acceptable. For many organizations, AI is becoming a production capability tied directly to revenue, customer experience, and operational risk delivered continuously through inference, RAG, and increasingly agentic systems.

That shift has created a new operating model: the AI Factory. The reason the metaphor fits is simple: factories are designed around throughput, predictability, and economics. They convert inputs into output continuously, under load, and under change. AI factories do the same, converting data and electricity into tokens, decisions, and outcomes.

AI factories are also capital intensive. Once you deploy hundreds or thousands of GPUs, the biggest cost is not a “storage line item.” It’s fully burdened compute sitting in a data center that is powered, cooled, and depreciating. In that environment, the most expensive failure mode is simple:

idle time.

And idle time is rarely caused by “slow GPUs.” It is most often caused by the system around the GPUs failing to keep them productive at scale, especially as workloads diversify and concurrency becomes the default.

“In AI factories, idle time is the most expensive failure mode because it turns paid-for compute into millions of dollars in stranded capital.”

AI Factories Are Scaling Fast, but the Economics Are Unforgiving

The AI industry is now optimizing for token economics. That’s why “cost per token” has become a more meaningful scoreboard than peak performance claims. If your AI factory is producing fewer tokens per GPU hour than expected, the outcome isn’t just slower progress, it is structurally higher cost, and often a lower ability to scale sustainably.

What makes this hard is that idle time accumulates quietly. An AI factory can appear to be “working” while still underproducing. Utilization drifts down. Latency becomes unpredictable. Pipelines grow fragile. The organization responds by adding more compute and more layers of complexity, raising costs further while never addressing the root cause.

The Challenges of AI Today. In many AI factories, GPUs wait on data delivery and pipeline stalls; a material fraction of compute can go unused when the stack can’t sustain throughput under production conditions.

This is why AI factory economics are not primarily a compute problem. They are a data flow problem.

Cost per Token Is the Right Metric, yet AI Factories Still Underperform

In a recent NVIDIA blog (Rethinking AI TCO: Why Cost per Token is the Only Metric that Matters), NVIDIA states that cost per token is the metric that matters because it reflects what a system produces in real environments, not just theoretical peak performance. I agree with the premise: tokens are output, and output economics should drive infrastructure decisions.

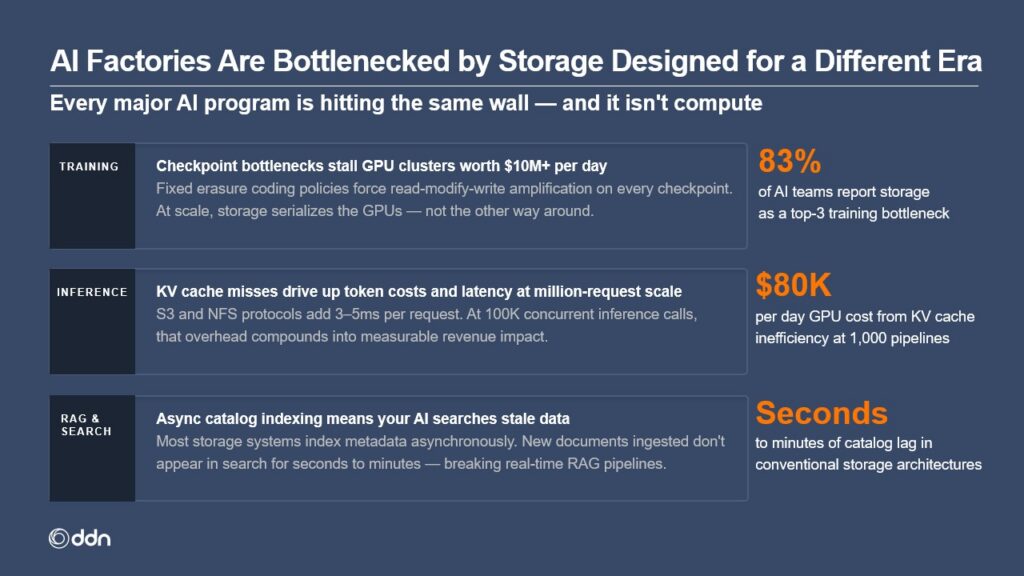

Where AI factories struggle is exactly what that framing implies: token economics are a system property. The denominator (tokens produced) collapses when any layer becomes the bottleneck. In real deployments, the bottleneck is frequently the data path: data movement, metadata behavior, checkpoint/restart overhead, retrieval concurrency, or pipeline orchestration friction.

To make that concrete, it helps to simplify AI factory workloads into repeatable patterns. Almost every production environment is some combination of four.

The four AI factory workflow patterns that matter. Training, inference, RAG, and search cover most production AI factories. Each pattern stresses the data path differently and each can strand GPUs when the data platform behaves inconsistently under load.

When these patterns run concurrently across teams, pipelines, and tenants the failure modes become predictable: GPU starvation, checkpoint stalls, metadata bottlenecks, uncontrolled data growth, and cost volatility. None of these problems are surprising. What’s surprising is how often they are treated as afterthoughts.

“Token economics are a system property. The denominator collapses the moment GPUs start waiting on data.”

Choose the Data Platform Before You Buy and Implement the AI Factory

The most common sequencing error I see is selecting the GPU platform first and treating the data layer as a capacity exercise to solve later. That approach worked in earlier eras because storage could often sit behind the compute. In modern AI factories, the data layer is in the critical path, especially for inference and RAG.

If you wait until after deployment to discover that metadata behavior becomes non-deterministic under concurrency, or that checkpointing turns routine operations into hours-long stalls, you’ve already baked idle time into your AI factory’s economics.

A production AI data platform must be evaluated on manufacturing-grade requirements:

- Sustained throughput under contention (not a single peak benchmark)

- Deterministic metadata performance at scale (predictable behavior with millions/billions of objects)

- High concurrency at inference scale (requests and parallel clients, not one job in isolation)

- Checkpoint behavior that doesn’t stall training (because failures and retries are normal at scale)

- Predictable performance as data grows (day 1 and day 1,000 should look the same)

If you validate these properties early, you avoid the hidden tax of underproduction. If you validate them late, you end up paying for idle time as a permanent line item.

Why DDN Is the Data Platform To Build AI Factories On

At DDN, our goal is not to win isolated benchmarks. It is to keep the most expensive asset in the AI factory (NVIDIA GPUs) consistently productive across the full lifecycle: training, fine-tuning, retrieval, inference, and the operational realities that come with running these systems at scale.

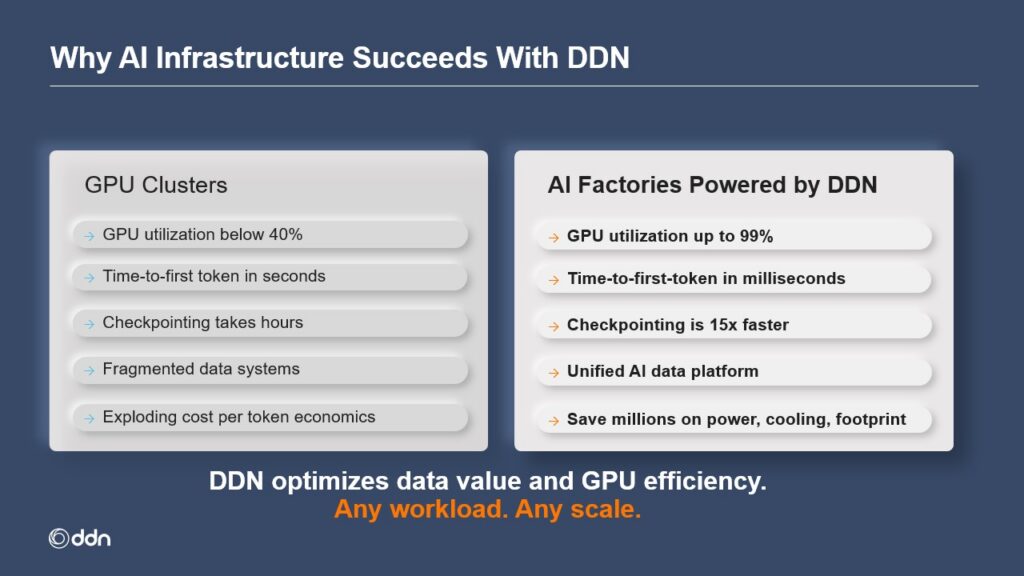

The difference between “a GPU cluster” and “an AI factory” becomes obvious when you compare outcomes side-by-side.

GPU cluster vs. AI factory powered by DDN. GPU clusters often degrade from acceptable lab behavior to poor production behavior: lower utilization, higher time-to-first-token, slow checkpointing, and fragmented data systems. AI factories powered by DDN are engineered to behave predictably under real load, so compute produces output instead of waiting.

What customers achieve with DDN in production:

- Up to 99% sustained GPU utilization

- 10–20× more inference tokens per GPU

- 15× faster checkpointing

These outcomes are not about tuning one job. They come from engineering the data path to behave deterministically under concurrency: the data platform must sustain throughput, keep metadata predictable at scale, and avoid “pipeline stalls” that turn compute into idle time.

This is why data platform selection must happen before the AI factory build becomes fixed: once idle time becomes structural, you pay for it forever: in capex, power and cooling, and time-to-outcome.

“Customers aren’t limited by model ideas. They’re limited by infrastructure that can’t keep up, especially the data path under concurrency.”

Call to Action: Three Next Steps To Reduce Idle GPUs This Quarter

If you’re building or scaling an AI factory this year, here are three practical actions that improve outcomes quickly:

Measure the Idle Tax

Baseline effective GPU utilization by workload (training vs inference vs RAG) and quantify how much time is spent waiting on data movement, metadata operations, or pipeline contention. Treat the gap as stranded capital, not “performance tuning.”

Map Your Environment to Real Factory Patterns

Use the four workflow patterns (training, inference, RAG, search) to test your pipeline under realistic concurrency, not a single job in isolation.

Book an AI Factory Data Platform Review with DDN

Bring your workload mix, concurrency targets, checkpoint behavior, and growth plan. DDN will help identify the most likely failure modes and the highest-leverage fixes so GPUs spend time producing tokens, not waiting on the pipeline.

In summary:

- Cost per token collapses when GPUs wait on data.

- Mixed workloads (training + inference + RAG + search) make data determinism a requirement, not a nice-to-have.

- Customers running DDN in production achieve:

- Up to 99% sustained GPU utilization

- 10–20× more inference tokens per GPU

- Up to 70% lower power and cooling costs

Pick the data platform first. It determines whether your AI factory produces tokens to advance your business goals… or causes millions in stranded capital due to GPU idle time.