By Jean-Thomas Acquaviva, Senior Researcher, Pre-Sales Engineering, DDN

Metadata To Steer Computing Power Right to the Target

Analyzing satellite imagery for crop health and yield prediction is critical for global agriculture, but the massive scale of data makes traditional AI processing slow and expensive. Hydrosat and the DaFab project have achieved a performance breakthrough by shifting from resource-intensive, pixel-by-pixel processing to an intelligent, geometry-driven approach.

Hydrosat’s AI pipeline for crop detection and yield prediction leads the market in accuracy. Today, the Hydrosat computation pipeline is a sophisticated chain of AI models working pixel by pixel on satellite images. Under the DaFab project, satellite images are AI-enhanced with metadata that accurately describes the geometry of every farm plot in the images.

The Hydrosat pipeline now uses this metadata to guide computation, taking the entire agricultural parcel as input instead of a single pixel. Comparative tests were conducted on Luxembourg’s MeluXina high-performance computing platform to assess realistic deployment scalability using CPU-based execution. The evaluation focused on three primary metrics: runtime, memory usage, and storage requirements.
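To make the change of input granularity concrete, here is a minimal Python sketch of collapsing per-pixel values into one value per parcel. It is not Hydrosat's code. The function name `aggregate_by_parcel`, the toy 3x4 band, and the assumption that the parcel geometry has already been rasterized into a grid of integer parcel IDs (with 0 meaning "no parcel") are all illustrative.

```python
from collections import defaultdict
from statistics import mean


def aggregate_by_parcel(pixel_values, parcel_ids, background=0):
    """Return {parcel_id: mean pixel value} for two same-shaped 2D lists.

    parcel_ids is a hypothetical rasterized form of the parcel geometry
    metadata: each cell holds the ID of the parcel covering that pixel.
    """
    buckets = defaultdict(list)
    for value_row, id_row in zip(pixel_values, parcel_ids):
        for value, pid in zip(value_row, id_row):
            if pid != background:  # skip pixels outside any parcel
                buckets[pid].append(value)
    return {pid: mean(values) for pid, values in buckets.items()}


# Toy single-band image (values are made up for illustration).
band = [
    [0.2, 0.4, 0.8, 0.6],
    [0.2, 0.4, 0.8, 0.6],
    [0.0, 0.0, 0.7, 0.7],
]
# Matching grid of parcel IDs; 0 marks pixels that belong to no parcel.
parcels = [
    [1, 1, 2, 2],
    [1, 1, 2, 2],
    [0, 0, 2, 2],
]

features = aggregate_by_parcel(band, parcels)
pixel_inputs = sum(pid != 0 for row in parcels for pid in row)
parcel_inputs = len(features)
print(features, pixel_inputs, parcel_inputs)
```

In this toy case a downstream model would receive 2 parcel-level inputs instead of 10 per-pixel inputs. On real imagery, where a single parcel can cover many pixels, the reduction in the number of model evaluations is correspondingly larger.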

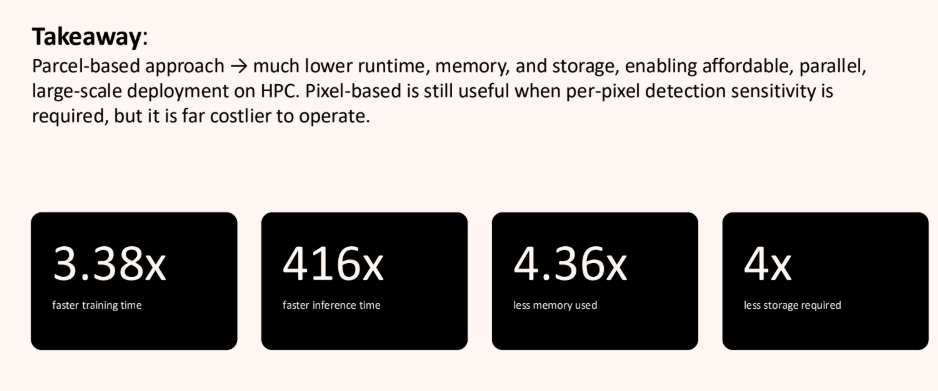

Injecting this metadata into Hydrosat’s pipeline delivers a major acceleration, further strengthening Hydrosat’s leadership in the market.

Train 3x Faster

Building the model is the most computationally heavy step and requires significant hardware resources. The parcel-based approach is drastically faster than the pixel-based workflow across all tested configurations. In the 8-thread configuration, the parcel model trained in approximately 1,600 seconds, whereas the pixel-based model took nearly 4,800 seconds, a reduction of about 67% in training time. In other words, training a model with metadata is about 3x faster than relying on pixel data alone.

400x Faster Inference

Training may be the most computationally intensive task, but it is a one-time effort. The real business value comes from inference, and this is where metadata injection delivers its largest acceleration. Parcel-level inference completed in under 2.5 seconds using 16 threads. In stark contrast, pixel-level inference averaged 936.2 seconds under the same configuration. This speedup of roughly 400x translates directly into the value the Hydrosat product can generate. In today’s token-driven AI economy, an improvement of more than two orders of magnitude makes it possible to run inference at scale with a massive return on investment, and it unlocks new markets such as infer-on-your-laptop.
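The training and inference speedups can be checked directly from the reported runtimes. The short Python sketch below does that arithmetic. The helper names are illustrative, and the inputs are the rounded figures quoted in the text ("approximately 1,600", "nearly 4,800", "under 2.5"), so the results are approximate.

```python
def speedup(baseline_s, improved_s):
    """How many times faster the improved run is than the baseline."""
    return baseline_s / improved_s


def reduction_pct(baseline_s, improved_s):
    """Percentage of the baseline runtime that was eliminated."""
    return 100.0 * (baseline_s - improved_s) / baseline_s


# Training, 8 threads (rounded figures from the text).
train_speedup = speedup(4800, 1600)
train_reduction = reduction_pct(4800, 1600)

# Inference, 16 threads. The parcel runtime is reported as "under 2.5 s",
# so dividing by 2.5 gives a lower bound on the speedup.
infer_speedup_floor = speedup(936.2, 2.5)

# Parcel runtime that corresponds to exactly 400x.
parcel_s_for_400x = 936.2 / 400

print(f"training: {train_speedup:.1f}x faster, {train_reduction:.1f}% less time")
print(f"inference: at least {infer_speedup_floor:.0f}x faster")
print(f"400x corresponds to a parcel runtime of {parcel_s_for_400x:.2f} s")
```

With a parcel runtime of exactly 2.5 seconds the ratio would be about 374x. A 400x speedup corresponds to a parcel runtime of roughly 2.34 seconds, which is consistent with the reported "under 2.5 seconds".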

Improved Platform Efficiency

Using metadata is essentially a more intelligent way to solve the problem, whereas a brute-force strategy such as adding more hardware yields diminishing returns in speed. For both methods, scaling beyond 16 threads provides no further speed improvement. Hardware scalability also has hard limits: even on a supercomputer-class node, the pixel-based model hit an Out-of-Memory (OOM) error when attempting inference with 64 threads.

6x More Efficient Peak Memory Usage

Memory capacity is a critical constraint for concurrent processing in HPC environments. With the current chip crisis in particular, memory is the key resource to optimize.

In this respect, the parcel workflow required a peak of 39 GB for data preprocessing, 28 GB for training, and 39 GB for inference. The pixel workflow demands considerably more: 115 GB for preprocessing, 104 GB for training, and a peak of 250 GB during inference.

This has a direct operational impact. With its lightweight memory footprint, the parcel approach allows approximately 10 to 12 concurrent tasks to run on a single 512 GB node, whereas the heavy memory demands of the pixel approach restrict the system to a single task per node.
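The concurrency figures follow from dividing node memory by each workflow's peak footprint. The Python sketch below shows one way to reproduce them. The 10% memory headroom reserved for the operating system and runtime overhead is an assumption made for illustration, not a number from the tests, and `tasks_per_node` is a hypothetical helper.

```python
import math


def tasks_per_node(node_gb, peak_task_gb, headroom=0.10):
    """Number of tasks that fit in memory at once.

    headroom is the fraction of node memory kept free for the OS and
    runtime overhead (an assumed value, not taken from the source).
    """
    usable_gb = node_gb * (1.0 - headroom)
    return math.floor(usable_gb / peak_task_gb)


NODE_GB = 512          # node memory quoted in the text
PARCEL_PEAK_GB = 39    # parcel workflow peak (preprocessing / inference)
PIXEL_PEAK_GB = 250    # pixel workflow peak (inference)

parcel_tasks = tasks_per_node(NODE_GB, PARCEL_PEAK_GB)
pixel_tasks = tasks_per_node(NODE_GB, PIXEL_PEAK_GB)
parcel_tasks_no_headroom = tasks_per_node(NODE_GB, PARCEL_PEAK_GB, headroom=0.0)

print(parcel_tasks, pixel_tasks, parcel_tasks_no_headroom)
```

Under this assumption the parcel workflow fits 11 concurrent tasks and the pixel workflow fits 1, in line with the figures above. With no headroom at all, the theoretical ceiling for the parcel workflow is 13 tasks.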

5x Storage Cost Reduction

Metadata acts as an additional semantic layer that makes it possible to extract more information from the same volume of data, leading to smarter and smaller AI models. For the pixel-based workflow, the serialized model files alone take up 164 GB. In contrast, the parcel-based approach has a highly efficient storage footprint of approximately 30 GB in total. This consists mostly of the trained model files (~25.5 GB), alongside small boundary files (4.6 GB) and training datasets (0.2 GB).

Overall, metadata reduced storage requirements by a factor of more than 5. In the competitive Earth Observation market, smaller, smarter, more agile models make the difference.
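The storage ratio can be reproduced from the component sizes listed above. The following Python sketch is only a worked check of that arithmetic; the variable names are illustrative and the sizes are the approximate values quoted in the text.

```python
# Parcel-based storage components in GB, as quoted in the text.
parcel_storage_gb = {
    "trained model files": 25.5,
    "boundary files": 4.6,
    "training datasets": 0.2,
}
# Serialized model files of the pixel-based workflow, in GB.
pixel_model_gb = 164.0

parcel_total_gb = sum(parcel_storage_gb.values())

# Pixel model files versus the whole parcel footprint.
total_factor = pixel_model_gb / parcel_total_gb
# Pixel model files versus parcel model files only.
model_only_factor = pixel_model_gb / parcel_storage_gb["trained model files"]

print(f"parcel total: {parcel_total_gb:.1f} GB")
print(f"reduction vs. total footprint: {total_factor:.1f}x")
print(f"reduction vs. model files only: {model_only_factor:.1f}x")
```

Compared with the full parcel footprint of about 30.3 GB, the reduction is roughly 5.4x. Comparing model files alone gives roughly 6.4x.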

Conclusion

Metadata is confirmed as the key enabler for performance in computation-heavy AI pipelines, transforming the Hydrosat workflow from a brute-force, pixel-by-pixel method into an intelligent, parcel-based approach that uses geometry data to steer computing power. This metadata-driven strategy delivers massive performance gains, including a dramatic acceleration in inference and faster model training. Critically, it also improves operational efficiency, with a better return on investment for the infrastructure. By drastically reducing runtime and resource demands, metadata is the fundamental factor that enables operational scalability and cost reduction for large-scale deployment on HPC infrastructures like MeluXina.

About Hydrosat

Hydrosat is the leading deep tech company leveraging thermal imagery to measure water stress and increase agricultural productivity. Hydrosat currently monitors millions of hectares for customers such as NOAA, NRO, Bayer, SupPlant, and Nutradrip, who trust the company’s high-resolution, timely satellite thermal imagery to deliver advanced analytics that convey precise crop yield forecasts and improved irrigation tools to financial and agribusiness customers around the globe.

For more information, please visit www.hydrosat.com

Hydrosat Press Contact:

Christine Vlachou

Sr. Marketing & Communications Manager

marketing@hydrosat.com

About DaFab

DaFab is a Horizon Europe-funded project designed to maximize the value of massive Copernicus Earth Observation data. It combines Artificial Intelligence, High-Performance Computing, and cloud infrastructures to efficiently process these immense datasets.

A key technical achievement is its Unified Metadata Catalog, which extends CERN’s Rucio system to enable advanced, semantic data searches. Ultimately, the project accelerates the delivery of actionable environmental insights for critical applications like flood detection and precision agriculture. The DaFab project is led by DDN, and the key technological partners are FORTH, CERN, GCORE, ECMWF, LuxProvide, Thales Alenia Space, and the Jožef Stefan Institute.

About DDN

DDN is the coordinator of the DaFab project, where it injects its unique expertise in AI data management. DDN is the world’s leading provider of AI data storage and data management platforms, powering over 20 years of innovation across HPC, enterprise, and the largest AI deployments on Earth. With its EXA, Infinia, and intelligent data management platforms, DDN delivers unmatched performance, scale, and business value for customers building next-generation AI factories, hyperscale clouds, and Sovereign AI initiatives. DDN is the trusted partner for thousands of the world’s most data-intensive organizations, including the leading national labs, research institutions, enterprises, hyperscalers, financial firms, and autonomous vehicle innovators. For more information, visit www.ddn.com.