AI projects are being conceived for every possible market, and the diversity of approaches to delivering AI-infused applications is extraordinary. At the center of many of these projects are two core elements: NVIDIA accelerated computing and an incessant demand for data. At NVIDIA GTC, the global AI conference running this week in San Jose, Calif., we at DDN are demonstrating new products, such as our AI400X2 Turbo, and announcing expanded certifications and qualifications with NVIDIA reference architectures, reference designs and products. These announcements reflect our commitment to meeting the full range of needs in our customer markets and to delivering maximum value from our customers’ accelerated computing infrastructure.

DDN AI400X2 with NVIDIA OVX Reference Architecture

Coupling DDN AI solutions with NVIDIA-Certified OVX servers and high-speed NVIDIA networking gives you the same highly efficient GPU utilization and storage simplicity you would get from other supercomputing-oriented solutions.

The NVIDIA OVX reference architecture is aimed at enterprise customers running generative AI, inference, training and industrial digitalization applications. OVX systems are composed of high-performance infrastructure that combines NVIDIA L40S GPUs with NVIDIA Quantum-2 InfiniBand or NVIDIA Spectrum-X Ethernet and NVIDIA BlueField-3 networking, as well as NVIDIA AI Enterprise software for AI workloads and the NVIDIA Omniverse platform for graphics-intensive workloads.

DDN is proud to be one of the first to join the new NVIDIA OVX storage validation program. To achieve this validation, DDN ran a suite of tests measuring storage performance and input/output (I/O) scaling across multiple parameters that represent the demanding requirements of various AI workloads. The validation program gives enterprises a standardized process to help ensure they are pairing the right shared storage appliances with their NVIDIA-Certified OVX servers and high-speed NVIDIA networking.

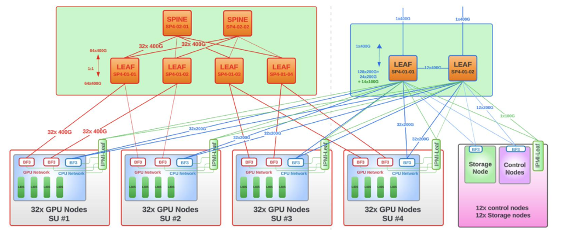

Heterogeneous and often incompatible data sources across an organization make it difficult to connect the dots and extract the most valuable business insights. To speed up your ROI, you need a modern data platform that accelerates AI application development, expands the impact of AI across the entire organization and lowers overall costs. DDN A³I solutions offer a differentiated and compelling approach to AI infrastructure, with the high-speed networking and parallel storage access required to feed a large cluster of AI nodes.

AI400X2-QLC Certified with NVIDIA DGX BasePOD

We released QLC versions of the AI400X2 storage platform last year and have now completed AI400X2-QLC certification for the NVIDIA DGX BasePOD reference architecture. While our TLC-based AI400X2 systems are ideal for enterprise-scale NVIDIA DGX SuperPOD solutions that require peak performance and power efficiency, our QLC-backed systems provide versatility and larger capacity for a variety of workloads.

DDN’s architectural approach to QLC unlocks the capabilities of this class of flash while maintaining superior device longevity. By writing data directly to QLC instead of placing a memory caching layer in front of the media, DDN maximizes the value of a customer’s investment and keeps capacity expansion simple.

DDN’s NVIDIA BlueField-3 SuperNIC-based Solutions

Part of our support for NVIDIA Spectrum-X Ethernet infrastructure comes from the integration of NVIDIA BlueField-3 into our EXAScaler 6-based platforms. The NVIDIA Spectrum-X networking platform, which uses NVIDIA Spectrum-4 switches and BlueField-3 SuperNICs, is the first Ethernet platform designed specifically to improve the performance and efficiency of Ethernet-based AI clouds. Optimization is crucial for every part of the AI stack, but storage often sees the most intensive I/O bottlenecks because thousands of compute clients may hit a limited number of storage nodes simultaneously. By leveraging NVIDIA BlueField-3 and the RoCE (RDMA over Converged Ethernet) protocol, DDN can deliver the same high performance and efficiency on Ethernet-based networks as on NVIDIA Quantum InfiniBand-based infrastructure.

For more information, see our recent press release.

DDN and Ethernet-Powered Performance in the Data Center

Recent testing has demonstrated that optimizations in EXAScaler 6.2 bring Ethernet performance close to parity with our already-leading InfiniBand-powered throughput. Customers who prefer Ethernet networking now have access to outstanding performance for their scalable AI workloads. Especially relevant is a 6X increase in write performance delivered through enhancements to the EXAScaler software. Enterprise AI storage solutions need well-balanced performance across all I/O characteristics, and EXAScaler systems can now deliver comprehensive Ethernet performance to complement customer AI clouds.

Support for NVIDIA Grace CPU Superchip

Testing has also demonstrated the efficiency of DDN’s A³I systems when feeding NVIDIA Grace CPU Superchip-based systems. Compared to existing x86 clients, we observed a 2x increase in read performance and a 35% increase in write performance, thanks to the faster Grace Superchips. As customers continue to increase the complexity and size of their AI models, the performance of the CPUs and GPUs in their data centers must be matched by equally efficient shared storage. Whether for simulation, data analytics or AI workloads, the combination of NVIDIA Grace CPU Superchip systems and DDN’s A³I storage is an ideal fit for data-intensive workloads.

Come See Us at NVIDIA GTC

Are you at NVIDIA GTC this week? If so, come by booth #816 in the main hall and talk to one of our AI storage experts.

Not at GTC? You can still register for the virtual conference for free and watch many sessions on demand.

See all of our GTC-related announcements on our news page.